With the advent of high-performance Ethernet technology such as 10 GbE and 40 GbE, more and more performance-critical workloads are being migrated to Ethernet-based IP networks. Traditionally this was the domain of Fibre Channel based SAN infrastructure, but today many new workloads are deployed on high-speed IP networks instead. I receive an increasing number of questions on how to enhance the performance of solutions based on such IP networks. With 10 Gb Ethernet being the de facto standard for performance-critical environments today (and 40 GbE on the horizon), a growing number of applications exceed the bandwidth that a single link can provide. To further enhance performance in such situations, multiple links can be combined into one logical connection. However, utilizing the full potential of such a connection requires a deeper understanding of the available options, which is what I explain in this article.

This article was previously published on Storageneers.

Background

Multipathing is a commonly used technique to improve availability and throughput in Fibre Channel SANs. If servers are connected with more than one physical link to a Fibre Channel SAN then multipathing ensures that all available links are in fact being used to transfer data. Should one link fail then the remaining link(s) keep the server connected with a reduced throughput.

In Ethernet / IP networks, link aggregation (more specifically LACP) is commonly used to achieve this behavior. By default, LACP (802.3ad / bond mode 4) distributes sessions across all available Ethernet links, which are also referred to as slave interfaces. A session is determined by the source and target MAC addresses. This means that a single source server (MAC) connecting to a single target server (MAC) will only utilize a single slave (Ethernet link), no matter how many slaves are available in the bond and no matter how many sessions a client initiates.

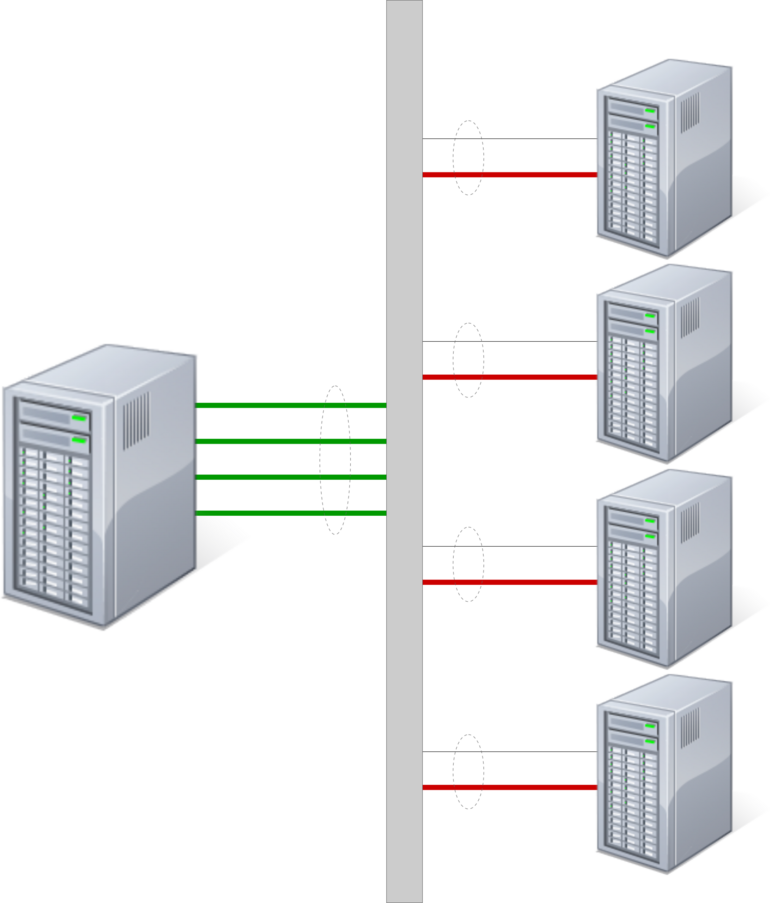

In situations where large numbers of clients connect to a single (storage) server, configuring this server with a multi-port LACP bond makes a lot of sense. LACP will distribute the sessions (determined by the client MAC) across all available slaves in the bond of the server. This way a server can utilize 2, 4, or even 8 Ethernet ports uniformly, assuming that enough clients connect to the server (fan-in). The clients, however, will only utilize a single link each. Configuring LACP on the client end of the connection therefore provides little throughput benefit, unless clients connect to multiple storage servers in parallel (fan-out).
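The default behavior described above can be illustrated with a small model. This is only a sketch: it assumes a simplified 'layer 2' hash (XOR of the last byte of each MAC address and the packet type, modulo the number of slaves), loosely following the formula given in the Linux bonding driver documentation. The MAC addresses and the function name `layer2_slave` are hypothetical, and real switches apply their own hashing for traffic in the other direction.

```python
from collections import Counter

ETH_P_IP = 0x0800  # EtherType for IPv4


def layer2_slave(src_mac: str, dst_mac: str, slave_count: int) -> int:
    """Simplified layer 2 hash: XOR of the last MAC bytes and the packet type."""
    src_last = int(src_mac.split(":")[-1], 16)
    dst_last = int(dst_mac.split(":")[-1], 16)
    return (src_last ^ dst_last ^ ETH_P_IP) % slave_count


server_mac = "02:00:00:00:00:10"  # hypothetical server MAC

# One client talking to one server: every frame maps to the same slave.
single = {layer2_slave("02:00:00:00:01:01", server_mac, 4) for _ in range(1000)}

# Fan-in: 64 hypothetical clients with consecutive MACs, 4 slaves in the bond.
clients = [f"02:00:00:00:01:{i:02x}" for i in range(64)]
fan_in = Counter(layer2_slave(mac, server_mac, 4) for mac in clients)

print("slaves used by one client:", single)
print("clients per slave (fan-in):", dict(sorted(fan_in.items())))
```

In this model a single MAC pair never leaves its slave, while the 64 clients are spread evenly. Real client MAC addresses are not consecutive, so the distribution in practice is usually less uniform.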

There are situations, however, in which only very few clients produce a great amount of data that needs to be transferred over Ethernet to a storage server. Examples of such configurations are backup servers, video surveillance gateways, streaming media servers and the like. Such clients can easily saturate a single Ethernet port (whether it is 1 Gb, 10 Gb or 40 Gb doesn't really matter).

Introducing Transmit Hash Policy

To improve performance in such configurations, the default LACP behavior explained above can be tweaked. LACP has a configurable option named 'Transmit Hash Policy'. The hash policy determines the algorithm by which LACP distributes sessions across available slaves. The default hash policy ('layer 2') distributes sessions based on source and target MAC address, as explained above. An alternate hash policy is 'layer 2+3', which also considers the source and target IP addresses when distributing sessions. An Ethernet interface can be configured with more than one IP address, and if the application / workload allows it, data can be sent to multiple IP addresses in parallel. This configuration allows more than one physical link to be utilized for a connection between a given pair of servers.
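To make the effect of the IP address on link selection concrete, here is a minimal sketch of a 'layer 2+3' style hash. It is a simplified model loosely based on the formula in the Linux bonding driver documentation, not the driver's actual code. All addresses and the name `layer23_slave` are assumptions chosen for illustration.

```python
import ipaddress

ETH_P_IP = 0x0800  # EtherType for IPv4


def layer23_slave(src_mac, dst_mac, src_ip, dst_ip, slave_count):
    """Simplified layer 2+3 hash: MAC bytes, packet type and IP addresses."""
    h = int(src_mac.split(":")[-1], 16) ^ int(dst_mac.split(":")[-1], 16) ^ ETH_P_IP
    h ^= int(ipaddress.IPv4Address(src_ip)) ^ int(ipaddress.IPv4Address(dst_ip))
    h ^= h >> 16  # fold the upper bits into the lower ones
    h ^= h >> 8
    return h % slave_count


# Hypothetical client and server, both with a two-port bond.
client_mac, server_mac = "02:00:00:00:01:01", "02:00:00:00:00:10"
client_ip = "192.0.2.10"
server_ips = ["192.0.2.20", "192.0.2.21"]  # two addresses on the server bond

slaves = [layer23_slave(client_mac, server_mac, client_ip, ip, 2) for ip in server_ips]
for ip, slave in zip(server_ips, slaves):
    print(f"traffic to {ip} uses slave {slave}")
```

With these example addresses the two target IPs land on different slaves. This is not guaranteed for arbitrary address pairs, so it is worth checking which link each address actually maps to before relying on it.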

Example with NFS Client and Server on Linux

The following example utilizes the NFS protocol to transfer data, but the underlying concepts apply to most IP-based protocols alike.

Imagine an NFS server is connected with two 10 GbE ports to a switch, and a single LACP bond is configured for both of these ports. An NFS client is also connected with two 10 GbE ports to the same switch. Again, LACP is configured on both ports. The server has a single IP address configured on the LACP bond interface, and the client mounts an export using this IP address. No matter how many parallel processes the client runs, it will be bound to a single 10 GbE link, which in the case of NFS will provide a maximum throughput of roughly 850 MB/s.

To improve performance of the NFS client in this configuration, one could change the configuration of the LACP bond interface on both client and server. On Linux, one would add the following bonding options to the configuration of the bond interface:

/etc/sysconfig/network-scripts/ifcfg-bond0:

...

BONDING_OPTS="mode=802.3ad miimon=100 xmit_hash_policy=layer2+3"

...

Much more detail on available bonding modes and options can be found in the Linux bonding driver documentation.

One would then need to add additional IP addresses to the LACP bond interface of the NFS server. NFS clients will send all traffic from the same IP address so there's no need to add additional IP addresses on the NFS client.

The NFS client is then able to mount the same export multiple times using the different IP addresses configured on the server. This will result in multiple mountpoints, and the application / workload needs to be designed carefully to utilize all available mountpoints equally. In addition, care needs to be taken so that I/O to these mountpoints does not interfere with one another. To minimize this risk, it is recommended that traffic to each mountpoint is directed to a dedicated subdirectory within the export. If the client is able to distribute parallel traffic evenly across all mountpoints / subdirectories, then the NFS client is able to utilize multiple Ethernet links in parallel, resulting in significantly enhanced throughput.
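How a workload might spread its files across the mountpoints can be sketched as follows. This is only an illustration under assumptions: the mountpoint paths, the `stream<N>` subdirectory naming and the function `plan_targets` are hypothetical, and the sketch only plans target paths without touching the file system.

```python
from itertools import cycle
from pathlib import PurePosixPath

# Hypothetical mountpoints: the same export mounted via two server IP addresses.
MOUNTPOINTS = ["/mnt/nfs_a", "/mnt/nfs_b"]


def plan_targets(filenames, mountpoints=MOUNTPOINTS):
    """Assign files round-robin to one dedicated subdirectory per mountpoint."""
    # stream0 lives under the first mountpoint, stream1 under the second, ...
    subdirs = [PurePosixPath(mp) / f"stream{i}" for i, mp in enumerate(mountpoints)]
    targets = {}
    for name, subdir in zip(filenames, cycle(subdirs)):
        targets[name] = str(subdir / name)
    return targets


plan = plan_targets([f"backup_{n:03d}.dat" for n in range(6)])
for name, path in plan.items():
    print(name, "->", path)
```

Because every mountpoint gets its own subdirectory, no two mountpoints ever write to the same file, which is the separation recommended above. Round-robin by file count only balances the links well when the files are of similar size.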

Using this configuration in internal testing with a pair of x3650-M3 servers the NFS throughput could be increased from ~850 MB/s to ~1500 MB/s. Note that data was written to and read from separate subdirectories per mountpoint in order to avoid any interference and prevent data corruption.
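To put these numbers into perspective, the following back-of-the-envelope calculation relates them to the raw line rate. It assumes that MB/s means decimal megabytes and ignores all protocol overhead, so the percentages are rough indications only.

```python
LINK_GBIT = 10                        # nominal speed of one link in Gbit/s
raw_mb_s = LINK_GBIT * 1000 / 8       # raw line rate of one link in MB/s

single_link_mb_s = 850                # measured with the default hash policy
bonded_mb_s = 1500                    # measured with 'layer 2+3' and two mounts

single_eff = single_link_mb_s / raw_mb_s      # share of one link's line rate
bonded_eff = bonded_mb_s / (2 * raw_mb_s)     # share of two links' line rate
speedup = bonded_mb_s / single_link_mb_s      # gain from using the second link

print(f"raw line rate per link: {raw_mb_s:.0f} MB/s")
print(f"single link utilization: {single_eff:.0%}")
print(f"two link utilization:    {bonded_eff:.0%}")
print(f"speedup:                 {speedup:.2f}x")
```

The second link adds a substantial gain but not a full doubling, which is consistent with the remark that the workload must be distributed evenly to benefit from both links.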

Other Options

As these tests show, changing the default Transmit Hash Policy from 'layer 2' to 'layer 2+3' can provide significant benefit in situations where few servers produce a great amount of data that needs to be transferred. However, the 'layer 2+3' policy requires multiple IP addresses on the storage server to fully utilize multiple physical links, and the application / workload needs to be designed carefully to span all available addresses.

Another available option is the 'layer 3+4' policy, which uses upper layer protocol information such as TCP / UDP ports. This allows multiple connections to different ports to span different physical links. In most real-life deployments this provides significant additional benefit, as it yields the best distribution of traffic for the majority of use cases. The 'layer 3+4' policy does introduce a certain risk of out-of-order delivery of packets in very specific situations (most traffic will not meet these criteria), so one should test carefully and weigh this risk against the possible performance benefits.
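The effect of including port numbers can be sketched in the same way. The model below is a simplified 'layer 3+4' style hash loosely following the Linux bonding driver documentation. Actual kernel versions differ in detail, and the name `layer34_slave`, the addresses and the port range are assumptions made for illustration.

```python
import ipaddress
from collections import Counter


def layer34_slave(src_ip, dst_ip, src_port, dst_port, slave_count):
    """Simplified layer 3+4 hash: IP addresses combined with TCP/UDP ports."""
    h = (src_port << 16) | dst_port  # both ports packed into one 32-bit value
    h ^= int(ipaddress.IPv4Address(src_ip)) ^ int(ipaddress.IPv4Address(dst_ip))
    h ^= h >> 16  # fold the upper bits into the lower ones
    h ^= h >> 8
    return h % slave_count


client_ip, server_ip = "192.0.2.10", "192.0.2.20"  # one IP address per host
NFS_PORT = 2049

# Eight parallel connections that differ only in their (hypothetical) source port.
ports = range(50000, 50008)
slaves = [layer34_slave(client_ip, server_ip, p, NFS_PORT, 2) for p in ports]
usage = Counter(slaves)

for port, slave in zip(ports, slaves):
    print(f"source port {port} -> slave {slave}")
print("connections per slave:", dict(sorted(usage.items())))
```

In this model, parallel connections between the same pair of IP addresses are spread over both links without any additional addresses on the server. A single connection always keeps the same port pair and therefore stays on one link.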

Example with Spectrum Protect Client and Server on AIX

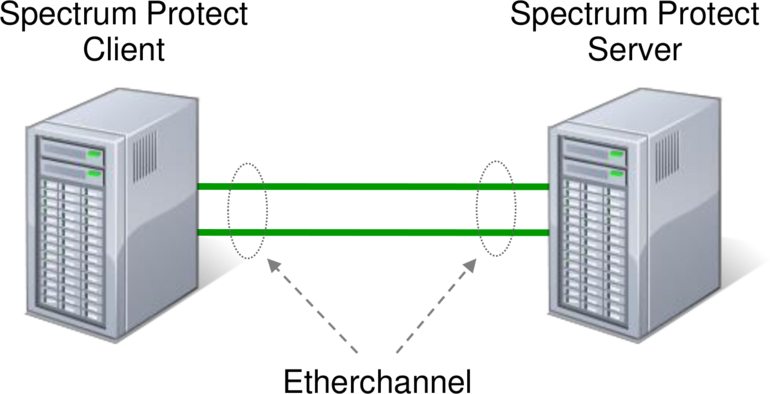

Imagine a setup with an IBM Spectrum Protect (TSM) backup client connected over 2 x 10 Gb Ethernet links to the network switch and a Spectrum Protect server that is also connected over 2 x 10 Gb Ethernet links to the same network switch. Both, the Spectrum Protect client and server run on AIX and have one TCP/IP address each. The following picture illustrates this setup:

In AIX, two (or more) network interfaces can be aggregated to form a so-called 'EtherChannel'. Even though the Spectrum Protect client is able to establish multiple connections (sessions) over a single IP address to the server in parallel, the default EtherChannel configuration will not be able to achieve more than 1 GB/s throughput, regardless of the number of configured links. This is the approximate maximum speed of a single 10 GbE link. In order to use two (or more) Ethernet links in parallel in the setup above, it is important to configure the EtherChannel in AIX (using smitty) with the following parameters:

- Mode: 802.3ad

- Hash Mode: src_dst_port

Using this configuration in internal testing resulted in roughly 2 GB/s throughput backing up data from a single client to the server in a single session.

Summary

Multipathing is more complex in Ethernet / IP networks than in traditional Fibre Channel SANs. LACP is generally able to provide the same improvements with regard to availability and throughput, but fully leveraging its benefits requires proper planning and increases operational complexity.

There are other solutions available to the problems described above, most of which utilize higher layer protocol features. An example is Multipath TCP, which enables a single TCP connection to span multiple physical links. Multipath TCP is currently flagged as an experimental standard by the IETF and only very few commercial implementations exist as of today.

Another alternative is intelligent scale-out file systems, which circumvent the bottleneck of individual point-to-point connections altogether.